Tech Talk: The storage economist

As organisations tackle shrinking budgets and the unknowns of big data while juggling return on investment (ROI) and total cost of ownership (TCO), Hitachi Data Systems (HDS) chief economist David Merrill explains why traditional data storage concepts and economics need to be challenged and why IT departments need to shift from being cost centres to profit centres.

Supratim Adhikari: David, let’s start with the issue of storage and how companies can get the most out of their existing infrastructure.

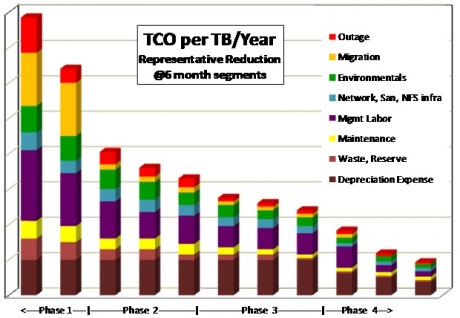

David Merrill: Essentially, there is a model that correlates money with certain levers that we know reduce costs. This chart is, in effect, a cost reduction roadmap that shows how an organisation can reduce costs through a phased approach.

The first thing an organisation needs to do is get the basics right, and you do this through technology. An organisation can start by consolidating its data centre assets, implementing disaster recovery solutions, applying updates to improve performance and replacing ageing, expensive assets. The unit cost reduction in this phase comes in at around 10 per cent, so a pretty moderate number.

The next phase looks at virtualisation, de-duplication and compression, and the ability to over-provision or oversubscribe capacity. When accompanied by tiering and policy-based asset re-purposing, this phase can deliver significant unit cost reductions, anywhere between 30 and 40 per cent.

The final two phases focus on behavioural change, which has a moderate impact on unit costs but can be tricky for an organisation, and on challenging and changing the norms of asset ownership and location.

So this is a pretty basic primer on data centre economics, and over time technical and operational investments will have to give way to behavioural, consumption and remote options.
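To see how the first two phases might add up, the sketch below chains the quoted reductions (about 10 per cent, then 30 to 40 per cent). It assumes each phase's reduction applies to the unit cost left after the previous phase, so the reductions compound; the interview does not say which basis HDS uses, and the function name `unit_cost_after_phases` is hypothetical.

```python
from typing import Sequence


def unit_cost_after_phases(start_cost: float, reductions: Sequence[float]) -> float:
    """Return the unit cost left after applying each phase's fractional reduction in turn.

    Assumption: each reduction applies to the cost remaining after the previous
    phase (reductions compound), not to the original starting cost.
    """
    cost = start_cost
    for r in reductions:
        if not 0.0 <= r < 1.0:
            raise ValueError(f"reduction {r} must be in [0, 1)")
        cost *= 1.0 - r
    return cost


if __name__ == "__main__":
    # Phase 1 (~10%) followed by phase 2 at the low and high ends of the quoted range.
    for phase2 in (0.30, 0.40):
        remaining = unit_cost_after_phases(100.0, [0.10, phase2])
        print(f"phase 2 at {phase2:.0%}: {remaining:.1f} left per 100 of starting unit cost")
```

Under that compounding assumption, the first two phases together leave roughly 54 to 63 per cent of the original unit cost, a combined reduction of about 37 to 46 per cent. The behavioural and ownership phases are left out because no figures are given for them.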

SA: Given the current buzz around big data, how is the current narrative different to the days of data warehousing?

DM: I am not sure it is, except that the volume of data has increased immensely. Five or six years ago we were dealing with computer-generated, database-generated data, but now we are dealing with social media and machine-generated data. This new wave of data generators is causing volumes to explode, and we just can’t continue to build storage infrastructure in the old-fashioned way.

It might be similar to data warehousing, but given the volume, the retention period and the modality of the data, we cannot afford to keep it on existing platforms. Traditional data storage concepts, architecture and economics all need to be fundamentally challenged.

SA: So how useful is big data to organisations and how do organisations adapt their storage architecture?

DM: The raw data itself is meaningful in the state in which it is created, and the analytics process allows us to discern a pattern. This turns data into information. However, this data doesn’t need to be stored indefinitely: you analyse it and then you can throw it away. If you accidentally lose it, you can regenerate it. So the idea of super-high-cost protection needs to be reconsidered. Platforms such as Hadoop and Azure use a nodal architecture that provides a minimal level of data protection, so you don’t need to back up or replicate all the data. It’s just being used for a specific purpose, and that’s a valid model given the volume of data being generated. But an organisation still needs to consider how much this costs and whether the cost is commensurate with the value being derived.

SA: Is there still a tendency for organisations to store everything?

DM: That’s very much the early-adopter approach, and those organisations are all getting to a point where they won’t be able to afford that model. The volume of data is making it economically unsustainable. When organisations don’t understand the economics of storage, they are not really managing their assets effectively, and big data doesn’t become a solution.

One of the things that has driven this sort of thinking is the perception that storage is cheap. But the price of storage is just a fraction of the total cost, running at about 12 to 15 per cent of the overall cost. Even if the disk were free, the total cost would not be. What organisations need is a total cost framework to determine their cost sensitivity, which can in turn be used to architect the best storage plans.
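The 12 to 15 per cent figure can be turned around to estimate total cost from the purchase price. The sketch below assumes that figure is the purchase price's share of total cost over the same period, and that removing the purchase price leaves every other cost unchanged; both function names are hypothetical.

```python
def total_cost_from_price(purchase_price: float, price_share: float) -> float:
    """Estimate total cost of ownership when the purchase price is a known share of it."""
    if not 0.0 < price_share <= 1.0:
        raise ValueError("price_share must be in (0, 1]")
    return purchase_price / price_share


def cost_if_disk_were_free(total_cost: float, price_share: float) -> float:
    """Cost that remains if the purchase price dropped to zero and nothing else changed."""
    return total_cost * (1.0 - price_share)


if __name__ == "__main__":
    price = 1.0  # normalise the purchase price to 1 so results read as multiples of it
    for share in (0.12, 0.15):
        tco = total_cost_from_price(price, share)
        print(f"price share {share:.0%}: TCO ~ {tco:.2f}x price, "
              f"{1 - share:.0%} of TCO remains even with free disk")
```

Taking the range at face value, total cost works out to roughly 6.7 to 8.3 times the purchase price, and 85 to 88 per cent of it would remain even if the disk cost nothing.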

SA: What do organisations tend to focus on when it comes to determining cost sensitivities?

DM: Well, no two companies have the same set of priorities, but generally speaking you have seven or eight recurring cost categories, such as depreciation, maintenance, labour, power, backup, migration and waste. What we do have now is the ability to work out what kind of technology is best suited to reducing each of those costs.
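One simple way to start a total cost framework is to rank the cost categories by their share of annual spend, which shows where an organisation is most cost-sensitive. The sketch below uses the seven categories named above; the key names, the `cost_shares` function and the example figures are hypothetical and chosen only for illustration, not taken from HDS's model.

```python
from typing import Dict, List, Tuple

# The recurring cost categories named in the interview; key names are illustrative.
CATEGORIES = ("depreciation", "maintenance", "labour", "power",
              "backup", "migration", "waste")


def cost_shares(annual_costs: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return each category's share of total annual cost, largest first.

    Categories missing from annual_costs count as zero; unknown keys are rejected.
    """
    unknown = set(annual_costs) - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    total = sum(annual_costs.get(c, 0.0) for c in CATEGORIES)
    if total <= 0:
        raise ValueError("total cost must be positive")
    shares = [(c, annual_costs.get(c, 0.0) / total) for c in CATEGORIES]
    return sorted(shares, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical annual figures for one storage estate, for illustration only.
    example = {"depreciation": 120.0, "maintenance": 90.0, "labour": 300.0,
               "power": 60.0, "backup": 180.0, "migration": 150.0, "waste": 100.0}
    for category, share in cost_shares(example):
        print(f"{category:<12} {share:6.1%}")
```

The categories at the top of the list are the ones where a technology choice (de-duplication for backup, say, or virtualisation for migration) would move total cost the most.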

SA: Understanding cost-effective storage solutions is one thing, but I guess you still need to ask the right questions, right?

DM: That’s right. You only have to look at how over-insured organisations are with regard to data protection. They are spending enormous sums on data protection, but on the other side of the equation you have to work out the probability of the organisation losing or corrupting data, or having to restore it. Calculating the annualised loss expectancy can often be telling, and while I would never say that backup isn’t important, there are ways to effectively balance IT costs against a business value proposition.
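Annualised loss expectancy (ALE) is conventionally the cost of a single incident (single loss expectancy, SLE) multiplied by the expected number of incidents per year (annualised rate of occurrence, ARO). The sketch below pairs that formula with a simple cost-benefit rule, assumed here rather than taken from HDS: protection is worth buying only if it cuts ALE by at least its own annual cost. The class, function and example figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DataLossRisk:
    """One data loss scenario, e.g. losing or corrupting a data set and having to restore it."""
    name: str
    single_loss_expectancy: float     # cost of one incident (SLE)
    annual_rate_of_occurrence: float  # expected incidents per year (ARO)

    @property
    def annualised_loss_expectancy(self) -> float:
        # Standard risk formula: ALE = SLE x ARO
        return self.single_loss_expectancy * self.annual_rate_of_occurrence


def protection_is_worthwhile(risk_before: DataLossRisk, risk_after: DataLossRisk,
                             annual_protection_cost: float) -> bool:
    """True if the yearly reduction in ALE is at least what the protection costs per year."""
    ale_reduction = (risk_before.annualised_loss_expectancy
                     - risk_after.annualised_loss_expectancy)
    return ale_reduction >= annual_protection_cost


if __name__ == "__main__":
    # Hypothetical figures for regenerable analytics data, for illustration only.
    unprotected = DataLossRisk("analytics scratch data", 20_000.0, 0.5)
    protected = DataLossRisk("analytics scratch data, replicated", 20_000.0, 0.05)
    print(unprotected.annualised_loss_expectancy, protected.annualised_loss_expectancy)
    print(protection_is_worthwhile(unprotected, protected, annual_protection_cost=25_000.0))
```

For data that can simply be regenerated, the single loss expectancy is small, so expensive protection rarely clears this bar; that is the over-insurance the answer describes.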

SA: That would need a change in thinking from an IT leadership point of view, wouldn’t it?

DM: What we need is IT leadership that is more sensitive to the outward-facing value of IT systems. IT departments need to shift from cost centres to profit centres, with CIOs and CTOs bringing the business value of what technology can do to the boardroom.

If IT is just happy being a cost centre, it will eventually be left behind as a broker. The really provocative opportunity is for IT leaders to tell the top brass: here’s all this data, let’s harvest it and bring value into the business. So you see, IT moves from a cost centre to a profit centre.

I am not sure if a lot of CIOs and CTOs are qualified or incentivised enough to make that sort of transformation.

SA: But CIOs are making a push in the boardroom aren’t they? Or do they need to reskill?

DM: They are making a bigger push, and another trend I have picked up on is that, in some cases, a business’s chief financial officer is assuming the responsibilities of a CIO. Now that’s a great outcome when it comes to helping IT turn the corner as a profit centre. The CFO may not understand all the technology, but he or she might be better placed to see the value proposition. We have all the pieces in place, and it’s really a perfect storm to do something with big data, analytics and the cloud.

CIOs don’t have a choice: if they don’t make the push, they will eventually be replaced. Now, it’s not going to happen straight away, and it may be that an older generation of CIOs retires or dies before the shift takes hold. Having said that, IT is going to have to reinvent itself and look at the efficiencies of bringing value into a business. Storage economics, as it stands, helps us identify measures to reduce costs, but CIOs and CTOs need to take the next step and start exploring how to generate and track the revenue IT can create. That is the logical extension of reducing IT costs.