What the NBN cost-benefit review doesn't tell you

The final Cost-Benefit Analysis (CBA) report on the National Broadband Network (NBN) has generated plenty of discussion, but nearly nine months after the formation of the Cost-Benefit Analysis and Review of Regulation panel, the document is perhaps most noteworthy for all the things it leaves out.

Great expectations were held that the panel, led by Dr Michael Vertigan, would provide a rigorous dissection of the technical, social and economic elements that together make up the NBN. The key variables would be identified and, by applying a range of values to them, the potential economic and social benefits of the NBN would be mapped out.

Alas, that promise remains unfulfilled: the CBA is a superficial and flawed investigation of the rationale for the NBN and how it should be built.

Technical concerns abound

The NBN CBA attempts to lead the reader into believing that fibre-to-the-node (FTTN), hybrid fibre-coaxial (HFC) and fibre-to-the-premises (FTTP) provide similar capabilities. That’s not a fair comparison: FTTN and FTTP cannot both be called high-speed broadband on equal terms when one provides unreliable connections of up to 100 Mbps and the other provides reliable connections of up to 1 Gbps.

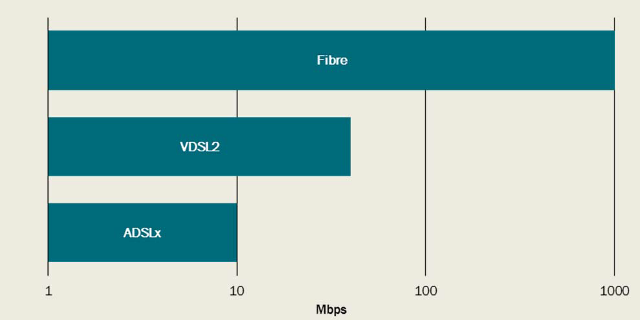

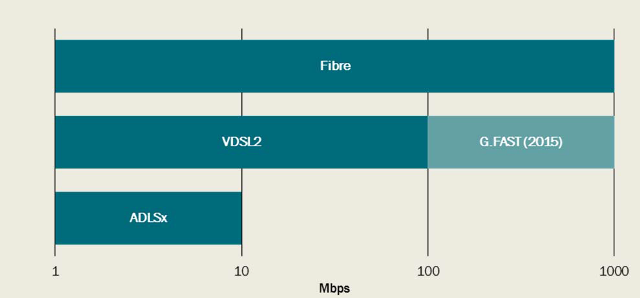

Broadband technology options, pre-2014 and 2014

The above charts (Chart C.3 on page 114 of the CBA), sourced from Alcatel-Lucent, state that in 2014 fibre provides up to 1 Gbps, and they give the reader the impression that FTTN is comparable because, in only a year’s time, G.Fast will become available and provide a similar 1 Gbps outcome.

What the chart doesn’t show is that Alcatel-Lucent also provides 10G-PON, which is capable of providing 10 Gbps downstream and 2.5 Gbps upstream. Is that an innocent omission or has the chart been carefully drawn to make FTTN with G.Fast appear equal to FTTP?

Let’s not forget that FTTP connections provide the advertised speed, while FTTN connections provide “up to” the advertised speed, and often fewer than 25 per cent of FTTN connections will achieve a speed between 75 and 100 per cent of the advertised speed (CBA page 189).

One significant concern is that no life cycle cost and performance analysis was carried out by a team of engineering experts. Had one been done, its data could have supplied information that is currently either missing, sketchy or incorrect.

The congestion equation

The analysis of congestion and its effect on performance and customer satisfaction also gets short shrift. Where demand during periods of high use is mentioned, the effects of congestion are not adequately translated into the model. To see how and why this matters, we need to look at the other technical concerns.

NBN Co’s Network Design Rules 2012 described, possibly for the first time by an Australian telecommunications company, how congestion was to be minimised for a class of traffic (TC1) to ensure that customers were satisfied with the telephony services to be provided over the NBN. By effectively managing TC1, the flow-on effect should be less congestion in the access network for all traffic classes.

The relationships between total network and link capacity, traffic class management, upload speeds and symmetric transmission requirements are not adequately covered in the CBA. Neither are the operational and maintenance costs, new applications or consumer expectations.
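The NBN Network Design Rules are not reproduced here, so the following is only a generic sketch of why link capacity and traffic class management have to be modelled together. It assumes a simple scheme in which priority (TC1-style) traffic is served first and whatever capacity remains is shared max-min fairly (water-filling) among best-effort demands. `Demand`, `allocate` and all the numbers are hypothetical and are not NBN Co's actual scheduler.

```python
from dataclasses import dataclass


@dataclass
class Demand:
    user: str
    tc1_mbps: float          # priority traffic (e.g. voice), served first
    best_effort_mbps: float  # everything else, shares what is left


def allocate(link_capacity_mbps: float, demands: list[Demand]) -> dict[str, float]:
    """Strict priority for TC1, then a max-min fair share of the leftover capacity.

    A hypothetical model for illustration only.
    """
    total_tc1 = sum(d.tc1_mbps for d in demands)
    if total_tc1 > link_capacity_mbps:
        raise ValueError("priority demand alone exceeds link capacity")

    alloc = {d.user: d.tc1_mbps for d in demands}
    remaining = link_capacity_mbps - total_tc1

    # Water-filling: serve the smallest best-effort demands first; each user
    # gets at most an equal share of what is still unallocated.
    pending = sorted(demands, key=lambda d: d.best_effort_mbps)
    for i, d in enumerate(pending):
        share = remaining / (len(pending) - i)
        give = min(d.best_effort_mbps, share)
        alloc[d.user] += give
        remaining -= give
    return alloc


demands = [Demand("A", 2.0, 10.0), Demand("B", 2.0, 60.0), Demand("C", 2.0, 80.0)]
print(allocate(100.0, demands))  # {'A': 12.0, 'B': 44.0, 'C': 44.0}
```

With a hypothetical 100 Mbps link, the priority traffic is fully protected, while users B and C, who between them want 140 Mbps of best-effort traffic, are each held to 44 Mbps. Every megabit reserved for the priority class is one less for the best-effort users to share, which is why capacity and traffic class assumptions cannot be modelled separately.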

While it’s natural for the CBA to be based on assumptions about how consumers use the internet each day (how much data they consume and what applications they use), it’s equally important to include the technical risk variables and assumptions.

- What is the cost of testing every copper pair, what percentage of copper pairs will be found to be below minimum performance standards for VDSL2 with vectoring, and when will that copper be remediated or replaced with fibre?

- What is the electricity cost for the FTTN cabinets and is there a risk associated with the availability and reliability of the power supply?

- Given the current pace of the rollout, where will the additional rollout teams needed to complete it come from?
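Questions like these are exactly the risk variables a CBA model can carry as ranges rather than single values. Here is a minimal sketch, assuming a simple expected-cost model: every pair is tested, and the failing fraction is then remediated or replaced with fibre. The function `expected_copper_cost` and every input value are hypothetical placeholders, not figures from the CBA or NBN Co.

```python
def expected_copper_cost(pairs: int, test_cost: float, fail_rate: float,
                         remediation_cost: float) -> float:
    """Test every pair, then remediate (or replace) the fraction that fails."""
    testing = pairs * test_cost
    remediation = pairs * fail_rate * remediation_cost
    return testing + remediation


# HYPOTHETICAL placeholder inputs, chosen only to show the sensitivity sweep.
PAIRS = 1_000_000
TEST_COST = 50.0            # dollars per pair tested (assumed)
REMEDIATION_COST = 1_000.0  # dollars per failing pair (assumed)

for fail_rate in (0.05, 0.10, 0.20):
    cost = expected_copper_cost(PAIRS, TEST_COST, fail_rate, REMEDIATION_COST)
    print(f"fail rate {fail_rate:.0%}: ${cost / 1e6:,.0f} million")
```

With these placeholders, the total moves from $100 million at a 5 per cent failure rate to $250 million at 20 per cent. The numbers themselves mean nothing. The spread is the point, and a fuller model would also sweep remediation cost, power costs and rollout timing.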

A snapshot in time

The accuracy of these underlying assumptions is vital and more than one data set should be used to build and analyse the technical model prior to it being included in the CBA.

Section 2.2 of the CBA contains much of the material relied upon to build a usage and demand model. The material contained in Section 2.2 is a reworking of material prepared some time ago for a UK audience. Irrespective of its origins, the substance of the material is out of date and does not adequately reflect current knowledge of how the internet will change and grow in the decades ahead.

Yet Section 2.2 states that "a number" of Australian internet service providers were contacted "both to test the modelling methodology and to obtain (confidential) data necessary to implement the model".

“The completed model findings were then further tested with internet service providers who provided feedback and comments.”

We may never know which Australian internet service providers were involved, but it would be very interesting to get their opinion now that Section 2.2 is in print.

The CBA is based entirely on the material provided in Section 2.2, and no alternative data sets are provided or used. This is unusual, and it places too much reliance on data provided by an organisation that does not hide its scepticism about the need for fibre. That organisation is ensconced in its belief that internet growth will be glacial over the next decade thanks to improved data compression techniques, and that consumer expectations will be adequately met by existing applications.

Section 2.2 appears to be a snapshot in time, one taken about five years ago, with the data then refined to match the data set. The problem is that there is no qualitative or quantitative evidence that the data set is accurate; we are simply told to accept it as it is.

Static assumptions

When you read Section 4.1 to learn about the scenarios evaluated you cannot move past the rather audacious assumption that technologies should remain static for 26 years so that the model can provide results for a comparison.

A careful look at Section 4.1 (CBA page 46) highlights an assumption that the speeds for FTTN, HFC and FTTP used in the CBA model are “held constant over the period to 2040”. Table 4.6 on the same page provides assumed download and upload speeds for each technology.

This assumption appears to come from an earlier report and was savaged by technologists at the time, yet here it is again.

The use of static values for the download and upload speeds for each technology over the period to 2040 is nonsense. By 2040, regular and anticipated upgrades to FTTP would see users enjoy speeds of between 10 and 40 Gbps downstream and between 4 and 8 Gbps upstream. There can be no justification for putting the world in a bubble.
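A few lines of code show the difference between a static speed assumption and an upgraded one. This sketch assumes a step-function upgrade path for FTTP through the G-PON (1 Gbps), 10G-PON and 40G-PON tiers mentioned in this article. The upgrade years 2025 and 2035 are hypothetical placeholders, and `speed_in_year` is an illustrative helper, not part of the CBA model.

```python
import bisect


def speed_in_year(schedule: dict[int, float], year: int) -> float:
    """Downstream speed (Gbps) of the most recent deployment on or before `year`."""
    years = sorted(schedule)
    idx = bisect.bisect_right(years, year) - 1
    if idx < 0:
        raise ValueError(f"no deployment on or before {year}")
    return schedule[years[idx]]


# HYPOTHETICAL schedules: the upgrade years are illustrative assumptions only.
static_fttp = {2014: 1.0}                            # speeds "held constant" to 2040
upgraded_fttp = {2014: 1.0, 2025: 10.0, 2035: 40.0}  # assumed PON upgrade path

for year in range(2015, 2041, 5):
    print(year, speed_in_year(static_fttp, year), speed_in_year(upgraded_fttp, year))
```

Under these assumptions, the two models agree until the first upgrade and then diverge by a factor of 40 by 2040. A model that holds speeds constant cannot register that gap at all.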

Note 8 (CBA page 46) states “This is likely to be a conservative assumption as the achievable speeds are likely to increase over time for all technology options, often with minimal cost.”

This is technical nonsense that has no basis in reality.

We know that for FTTN to move to G.Fast or any future technology, the copper will need to be shortened from the 500 metres used for VDSL2 with vectoring to about 100 metres or less. This will not be a “minimal cost” exercise; it will require the rollout of fibre past premises. Even an HFC network would require substantial remediation and upgrades to achieve speeds beyond the 1 Gbps that is already available on FTTP.

What this means is that the CBA model is very simplistic, relies on unjustifiable assumptions and does not appear to be capable of coping with even single-dimensional variables changing over time.

Section 4.5 (CBA page 50) is interesting as it describes a FTTP rollout that conforms to the changed circumstances provided in the Coalition’s NBN-related strategic review. Not only is the timing for the FTTP rollout altered but also the location and extent of the FTTP rollout.

Section 4.6 (CBA page 50) does not include the TPG FTTB rollout as a scenario variation. This is disappointing, because it would have been useful to see what effect TPG’s cherry-picking of high-value inner urban multi-dwelling units would have on the other scenario outcomes.

The internet is dying?

Engineers learn at an early age that technologies go through life cycles, and in the early years a technology will have significant periods of technological change that result in major performance improvements, new features and rapid expansion.

Yet reading Section 2.2, it appears that the internet has reached end of life and all that is left is obsolescence and eventually a trip to the tip. The CBA relies on Section 2.2 to such a degree that even a minor deviation from the dire predictions of the internet’s future would make it nothing more than expensive fish and chip wrapper.

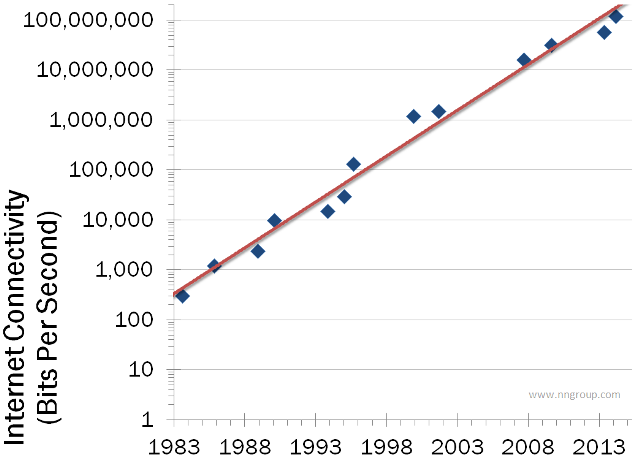

Sometimes data describing the future is found by looking backwards. Not always of course, but when you know that the future is a fibre access network then it is possible to see a time when consumers will have 10 Gbps, 40 Gbps and eventually 100 Gbps connections. To explain what is happening let us look at the anecdotal evidence provided by Nielsen’s Law of Internet Bandwidth.

Nielsen's Law of Internet Bandwidth

Source: Nielsen Norman Group

Nielsen plotted his internet connection speed beginning in 1983 and found a remarkable trend. As consumer demand for new applications grew, telcos would upgrade or overbuild infrastructure. As a result, Nielsen observed that his regular internet connection upgrades reflected the state of the internet.

He also noted that there were periods when internet capacity and connection speeds lagged behind his expectations but eventually caught up when telcos got the picture that customers were fed up and would look for another telco if an upgrade was not forthcoming.
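Nielsen's Law is usually stated as roughly 50 per cent compound growth per year in a high-end user's connection speed. Compounding that rate over ten years produces the multiplier behind Nielsen's projection from his 120 Mbps connection in 2014. `nielsen_projection` is an illustrative helper, and the 50 per cent rate is the law's rule of thumb, not a measured Australian figure.

```python
def nielsen_projection(start_mbps: float, years: int, annual_growth: float = 0.5) -> float:
    """Project connection speed assuming Nielsen's Law (~50% compound growth a year)."""
    return start_mbps * (1 + annual_growth) ** years


multiplier = 1.5 ** 10
print(f"10-year multiplier: {multiplier:.1f}")  # 10-year multiplier: 57.7
print(f"120 Mbps in 2014 -> {nielsen_projection(120, 10) / 1000:.1f} Gbps in 2024")
# 120 Mbps in 2014 -> 6.9 Gbps in 2024
```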

So should Nielsen’s data, which predicts that his internet connection speed in 10 years will be 57 times faster than his 120 Mbps connection in 2014 (about 7 Gbps), be used as the basis for the Australian NBN CBA?

The correct answer is: not in isolation, because other data sources could be used to provide a comparative analysis, including Cisco’s Visual Networking Index, Ookla and Akamai. Data sources are only as good as the information collected, and for this reason it is important that any reasonable study use several different data sources and publish the data used.

The CBA report includes data from the MyBroadband data cube v3 (CBA pages 100-101). The MyBroadband website uses non-standard definitions of quality and availability and is nothing more than a shallow attempt to justify the Coalition’s NBN plan.

Technology does not remain still for long and, as I have highlighted previously, we are in for an exciting time. Yet emerging technology and applications like UHD 8K television, PonoMusic, the Internet of Things and family high-definition video Skype calls hardly get a mention in the CBA.

In fact, Section 2.2 espouses a number of quite remarkable and unjustified assumptions.

“However, requirements may also fall. In particular, constantly improving video compression means that [for a given video definition] required bandwidth will decline.”

There’s no evidence that consumers will continue to accept the poor quality video and audio being streamed over the internet today. It only takes one vendor to break ranks and provide higher quality video and audio with the associated higher data rate and we could see a tsunami of data growth occur.

“Approximately 58 per cent of Australian households contain only one or two people. The average usage and bandwidth requirements of such households will, all other things being equal, be lower than that of larger households.”

This is a remarkable assumption that does not take into account age, demographics, occupation or a myriad of other factors that shape how consumers use the internet now or will use it in the future. The number of devices connected to the internet is about to go through a massive growth period, and that means the capacity for additional data transmission will need to keep pace.

A 2012 Gartner report, 10 Critical Tech Trends for the Next Five Years, predicted that IT demand in 2017 would be characterised by network bandwidth demand growing by 35 per cent per annum, and that everything would have a radio and GPS and be connected to the network.
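A constant compound growth rate is easier to grasp as a doubling time. This sketch assumes that Gartner's 35 per cent per annum holds steady for a decade, which the report itself does not guarantee. The helper names are illustrative.

```python
import math


def growth_multiple(annual_rate: float, years: float) -> float:
    """Total growth factor after compounding `annual_rate` for `years`."""
    return (1 + annual_rate) ** years


def doubling_time(annual_rate: float) -> float:
    """Years for demand to double at a constant compound annual rate."""
    return math.log(2) / math.log(1 + annual_rate)


rate = 0.35  # Gartner's 2012 figure, assumed constant
print(f"doubles every {doubling_time(rate):.1f} years")           # doubles every 2.3 years
print(f"grows x{growth_multiple(rate, 10):.0f} over 10 years")   # grows x20 over 10 years
```

At 35 per cent a year, bandwidth demand doubles roughly every 2.3 years and grows about twentyfold in a decade.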

Yet Chart 2.2 (CBA page 34) builds upon Chart 2.1 (CBA page 33) in an attempt to paint a picture in which, by 2023, only the “top 1 per cent of households have demand of 45 Mbps or more”.

The argument for the unjustifiably low figures in Section 2.2 comes crashing back to earth when the authors attempt to brush off published research that disagrees with their assessment.

“The figures of 15 Mbps and 43 Mbps for median and top 5 per cent of demand may seem low, particularly by comparison to the results of some other research in this area. However, the most common type of household comprises just two people. Even if those two are each watching their own HDTV stream, each surfing the web and each having a video call all simultaneously, then [in part thanks to the impact of improving video compression] the total bandwidth [in 2023] for this somewhat extreme use case for that household is just over 14 Mbps.”

By 2023, HDTV will be old technology, video calls will be HD quality or higher, and video compression may do nothing more than normalise the rate of increase in the bandwidth required for whatever the most common streaming format is at the time. That could be 16K, or 4K with 3D, and might also include multi-screen capability.
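The CBA's “somewhat extreme” two-person case lists six simultaneous activities (two HDTV streams, two web sessions and two video calls) and puts their combined total at just over 14 Mbps. The CBA's per-activity figures are not reproduced here, so this sketch divides the quoted total evenly. That is a crude assumption, but it shows the budget each activity is implied to fit within. `implied_average_rate` is an illustrative helper.

```python
# The six concurrent activities in the CBA's two-person scenario.
activities = [
    "HDTV stream", "HDTV stream",
    "web browsing", "web browsing",
    "video call", "video call",
]
total_mbps_2023 = 14.0  # "just over 14 Mbps", as quoted from the CBA


def implied_average_rate(total_mbps: float, concurrent_activities: int) -> float:
    """Average bandwidth per activity if a household total is split evenly (assumption)."""
    return total_mbps / concurrent_activities


per_activity = implied_average_rate(total_mbps_2023, len(activities))
print(f"Implied average per activity: {per_activity:.2f} Mbps")  # 2.33
```

That works out to an average of roughly 2.3 Mbps for each HDTV stream, video call and browsing session in 2023.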

If anything, the bandwidth estimates provided in the CBA, if taken at face value, should be a cause for alarm.

It was anticipated that the 2013 report by Ericsson, Chalmers University of Technology and Arthur D. Little titled Analyzing the Effect of Broadband on GDP would have found its way into the reference list. Possibly this study was not included because it found that increased broadband speeds would have a substantial effect on GDP? The Ericsson report concludes that “doubling broadband speeds for an economy can add 0.3 percent to GDP growth”. The CBA assumes a figure that is substantially lower than the one identified in the Ericsson report.
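Because the Ericsson figure is stated per doubling of speed, a speed increase is naturally expressed as a number of doublings (a base-2 logarithm). The sketch below assumes, as an extrapolation the report itself may not support, that the effect scales linearly with the number of doublings. The 25 and 100 Mbps speeds and the `gdp_growth_gain` helper are illustrative only.

```python
import math

# Per cent added to GDP growth per doubling of broadband speed (Ericsson, 2013).
GAIN_PER_DOUBLING = 0.3


def gdp_growth_gain(old_mbps: float, new_mbps: float,
                    gain_per_doubling: float = GAIN_PER_DOUBLING) -> float:
    """ASSUMPTION: the effect scales linearly with the number of speed doublings."""
    doublings = math.log2(new_mbps / old_mbps)
    return gain_per_doubling * doublings


print(f"{gdp_growth_gain(25, 100):.1f}")  # two doublings -> 0.6
```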

The telco effect

The CBA report does not adequately consider the “telco effect”, which Australian consumers have had to put up with for decades: drip-feeding increases in capacity and download speed while setting prices that limit consumer uptake of improved plans. Any analysis of the Australian telecommunications market needs to account for this dynamic, because drip-feeding capacity and download speed increases while maintaining tight control over price has artificially stunted internet growth in Australia for the past decade.

On the rare occasions when new infrastructure has been rolled out, such as the 3G and 4G networks, there has been a rapid increase in data usage, far beyond what the CBA’s assumptions predict.

What would happen if every Australian were provided today with 1 Gbps FTTP and a data allowance of 500 to 1000 GB per month? Should we believe, as the CBA tells us to, that the average Australian household would use less than 50 Mbps and only a fraction of its data allowance in 2023?
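Peak speed and monthly volume measure different things, and a demand figure means little until it is clear which one it refers to. This sketch converts between the two, assuming a 30-day month and decimal gigabytes (10^9 bytes). The helper names are illustrative.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # ASSUMPTION: a 30-day month


def average_rate_mbps(allowance_gb: float) -> float:
    """Average rate (Mbps) if a monthly allowance (decimal GB) is spread over the month."""
    return allowance_gb * 1e9 * 8 / SECONDS_PER_MONTH / 1e6


def monthly_volume_gb(rate_mbps: float) -> float:
    """Data moved (decimal GB) by running at `rate_mbps` for the whole month."""
    return rate_mbps * 1e6 * SECONDS_PER_MONTH / 8 / 1e9


for gb in (500, 1000):
    print(f"{gb} GB/month ~ {average_rate_mbps(gb):.1f} Mbps average")
print(f"50 Mbps sustained ~ {monthly_volume_gb(50):,.0f} GB/month")
```

Using a full 1000 GB allowance works out to an average of only about 3.1 Mbps. A household that sustained 50 Mbps all month would move about 16,200 GB. An average far below the line rate therefore tells you nothing about the peak speeds the household needs when several people are online at once.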

Beyond 2024

The CBA scenarios assume that the FTTP rollout would be completed in 2024, and the modelling effectively freezes technology, capacity and speed at the initial rollout characteristics. Technology upgrades are therefore discounted, and if an upgrade were to occur, Note 8 applies and no relative change occurs.

An FTTP rollout today would provide infrastructure with a 50-to-80-year life that can be easily and cost-effectively upgraded from G-PON to 10G-PON to 40G-PON and beyond. The lifetime of VDSL2 with vectoring and the existing HFC is 5 to 10 years at most, so why bother?
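The lifetime argument reduces to simple arithmetic: how many times must each access network be built to cover the same planning horizon? This sketch uses the lifetimes quoted in this article and an assumed 50-year horizon. It counts only whole-network builds and ignores the electronics upgrades (G-PON to 10G-PON and so on) that FTTP would also need over that period. `builds_over_horizon` is an illustrative helper.

```python
import math


def builds_over_horizon(horizon_years: int, asset_life_years: int) -> int:
    """Number of times a network must be (re)built to cover the horizon."""
    return math.ceil(horizon_years / asset_life_years)


HORIZON = 50  # years; an illustrative planning horizon (assumption)
for name, life in [("FTTP fibre plant (50-year life)", 50),
                   ("VDSL2/HFC (10-year life)", 10),
                   ("VDSL2/HFC (5-year life)", 5)]:
    print(f"{name}: {builds_over_horizon(HORIZON, life)} build(s)")
```

Over the same 50 years, the fibre plant is built once. The copper and HFC options need five to ten builds or major overhauls, each one a cost the CBA's frozen-technology model does not capture.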

The CBA provides the outcome it was designed to deliver despite its failure to adopt a reasonable underlying technical model and data set. The failure to include a life cycle cost and performance analysis effectively negates the opportunity for informed debate around the merits or otherwise of the CBA’s outcomes.

Participants in the NBN debate wanted to see a detailed and accurate analysis of the NBN that was based on credible and justifiable data and assumptions, but unfortunately this important opportunity has been lost.

Mark Gregory is a Senior Lecturer in the School of Electrical and Computer Engineering at RMIT University